I was working on a post about using LLMs for actual randomness and needed to understand how extensible Claude Code actually is.

Specifically, whether I could build a /tangent command that allows a user to explore options creatively.

Cracking open Claude

Like a fresh steaming buttery lobster claw to get the juicy white flesh inside, wait what was I talking about?

Claude Code ships as a compiled binary but the same code is on npm. Running npm pack @anthropic-ai/claude-code downloads a tarball whose core is a single 12MB cli.js, and I recommend you spend some time trying to understand the tool that has replaced all tools yourself.

15,000 lines of tight minified JavaScript are difficult to go through.

Every internal variable name has been crushed by the bundler into 2-3 character tokens.

When code is minified everything that makes it readable is removed: whitespace, linebreaks, comments.

The original TypeScript source probably has readable names like handleBtwQuestion or runForkedAgent, but I have to figure out what on earth IW4, GR, uGY, iR are.

Well not really because I'll use Claude Code to crack Claude Code.

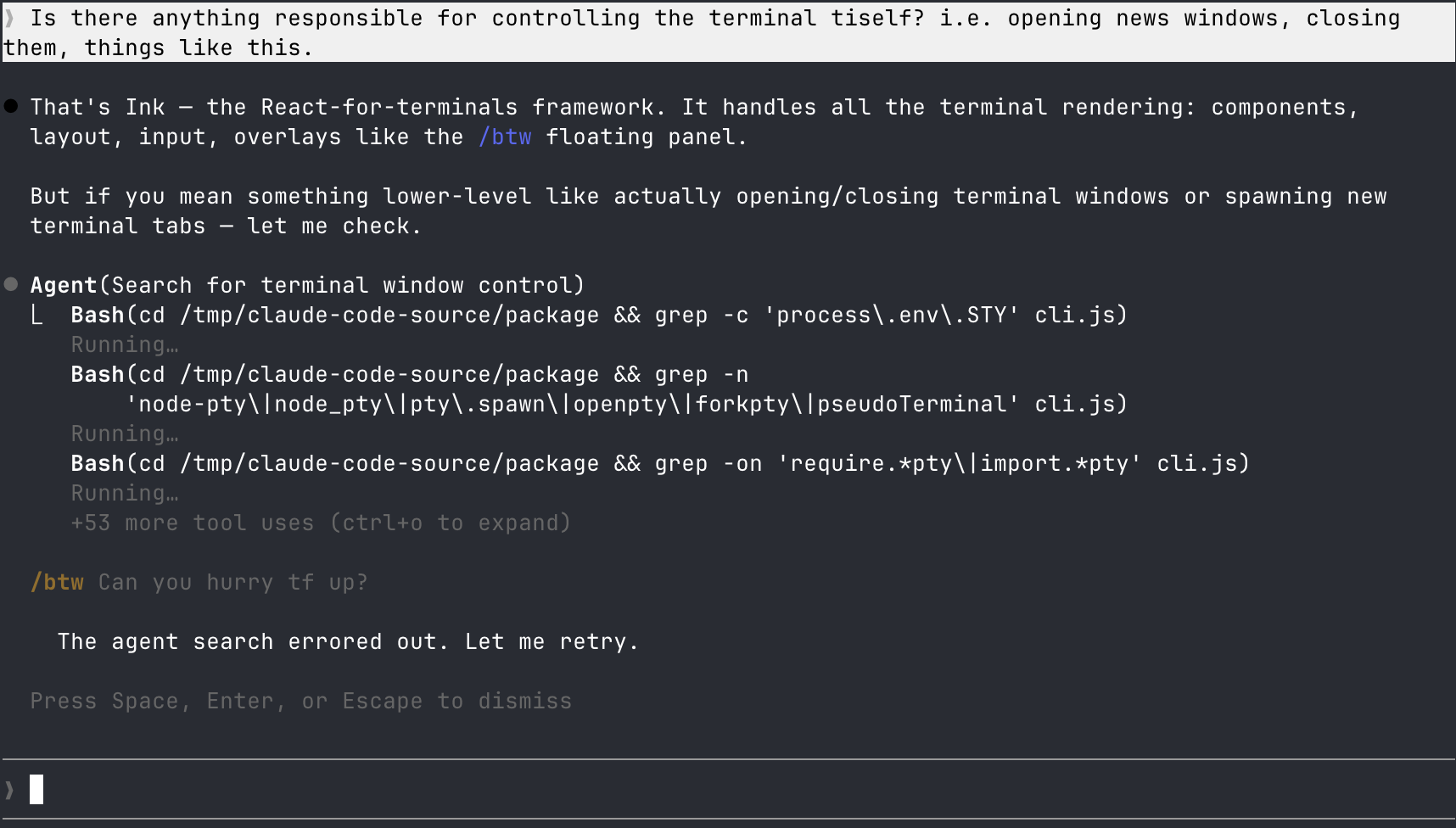

/btw

/btw is a new command in Claude Code that allows you to ask a quick question while Claude is mid-task (for example, /btw what was that config file?) and get an answer in a floating overlay without interrupting it.

Here is my most effective use of /btw, pestering Claude while he spawns agents for research. He does not appreciate it and has sabotaged the entire search!

Under the hood /btw is registered as a local-jsx command:

    {
      type: "local-jsx",
      name: "btw",
      description: "Ask a quick side question without interrupting the main conversation",
      isEnabled: () => k96(), // feature flag check
      immediate: true,
      argumentHint: "<question>"
    }

When you type /btw what was that config file called?, the flow is:

- yuY (the handler) - validates input, increments a usage counter, renders the btw component
- NuY (the React component) - shows the overlay UI, fires off the actual query
- IW4 (the query builder) - constructs the prompt and calls the forked agent runner
- GR (the forked agent runner) - streams the API response
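To make the registration and the first step of that flow concrete, here is a minimal sketch with readable names. Everything in it is hypothetical: `SlashCommand`, `parseSlashCommand`, `handleBtw` and `btwUsageCount` are my own stand-ins, not names recovered from the bundle, and the real handler renders a React component rather than returning a plain object.

```typescript
// Hypothetical shape mirroring the fields seen in the minified registration.
interface SlashCommand {
  type: "local-jsx";
  name: string;
  description: string;
  isEnabled: () => boolean;
  immediate: boolean;
  argumentHint: string;
}

const btwCommand: SlashCommand = {
  type: "local-jsx",
  name: "btw",
  description: "Ask a quick side question without interrupting the main conversation",
  isEnabled: () => true, // stand-in for the feature flag check
  immediate: true,
  argumentHint: "<question>",
};

// Split "/btw some question" into a command name and its argument string.
function parseSlashCommand(input: string): { name: string; args: string } | null {
  const match = /^\/(\S+)\s*([\s\S]*)$/.exec(input.trim());
  return match ? { name: match[1], args: match[2].trim() } : null;
}

let btwUsageCount = 0; // stand-in for the usage counter the handler increments

// Validate the input, bump the counter, and hand the question on.
function handleBtw(input: string): { question: string } | { error: string } {
  const parsed = parseSlashCommand(input);
  if (!parsed || parsed.name !== btwCommand.name) return { error: "not a /btw command" };
  if (!btwCommand.isEnabled()) return { error: "command disabled" };
  if (parsed.args.length === 0) return { error: `usage: /btw ${btwCommand.argumentHint}` };
  btwUsageCount += 1;
  return { question: parsed.args };
}
```

The counter only moves after validation passes, so an empty /btw does not count as a use in this sketch. Whether the real handler orders things that way is an assumption.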

The interesting part is what the query builder IW4 injects as the system prompt:

    This is a side question from the user. You must answer this question
    directly in a single response.

    IMPORTANT CONTEXT:
    - You are a separate, lightweight agent spawned to answer this one question
    - The main agent is NOT interrupted
    - You share the conversation context but are a completely separate instance

    CRITICAL CONSTRAINTS:
    - You have NO tools available
    - This is a one-off response - there will be no follow-up turns
    - NEVER say "Let me try..." or promise to take any action

Then it calls GR with maxTurns: 1, tool access denied, and skipCacheWrite: true, effectively neutering it.

GR means I want to fork

GR is the lightweight fork helper. It's how /btw and /fork branch off from your main conversation without the overhead of a full subagent.

It takes:

- promptMessages - what to send
- cacheSafeParams - system prompt, user context, conversation history
- canUseTool - permission callback
- maxTurns - how many agent loops to allow
- forkLabel - for logging/telemetry

It sets up a toolUseContext, copies the parent's message history, and calls rR directly, skipping the subagent setup layer entirely.
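Here is a rough sketch of what a helper like GR might look like with readable names. The option names come from the list above, but the types, `runFork`, `ToolDecision`, and the stubbed core loop are all my own assumptions, not the real signatures. The real loop presumably stops early once the model is done; this stub simply spends the whole turn budget.

```typescript
type Message = { role: "user" | "assistant"; content: string };
type ToolDecision = { behavior: "allow" } | { behavior: "deny"; message: string };

// Hypothetical readable version of the options the fork helper takes.
interface ForkOptions {
  promptMessages: Message[];
  cacheSafeParams: { systemPrompt: string; userContext: string; history: Message[] };
  canUseTool: (toolName: string) => ToolDecision;
  maxTurns: number;
  forkLabel: string;
}

// Stand-in for the shared model loop; a real one would call the API.
type CoreLoop = (messages: Message[], opts: ForkOptions) => Message;

function runFork(opts: ForkOptions, coreLoop: CoreLoop): Message[] {
  // Copy the parent's history so the fork never mutates the main conversation.
  const messages: Message[] = [...opts.cacheSafeParams.history, ...opts.promptMessages];
  const produced: Message[] = [];
  for (let turn = 0; turn < opts.maxTurns; turn++) {
    const reply = coreLoop(messages, opts);
    messages.push(reply);
    produced.push(reply);
  }
  return produced;
}

// A /btw-style call: one turn, every tool denied.
const parentHistory: Message[] = [{ role: "user", content: "Refactor the config loader" }];
const replies = runFork(
  {
    promptMessages: [{ role: "user", content: "what was that config file called?" }],
    cacheSafeParams: {
      systemPrompt: "This is a side question from the user.",
      userContext: "",
      history: parentHistory,
    },
    canUseTool: () => ({ behavior: "deny", message: "side questions have no tools" }),
    maxTurns: 1,
    forkLabel: "btw",
  },
  (messages) => ({ role: "assistant", content: `saw ${messages.length} messages` }),
);
```

The fork sees the parent's history plus the side question, but `parentHistory` itself is untouched afterwards, which is the whole point of forking.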

Forking skills

Skills with context: "fork" in their SKILL.md hit the same pipeline:

Iv1 (prepare context) -> uGY (fork executor) -> iR (agent setup) -> rR (API stream)

Everything in Claude Code that talks to the model goes through rR.

It's the shared core: a multi-turn loop that both the main conversation and all subagents use.

The difference is how they get there.

You're used to the main conversation where the prep is inline and calls the API stream directly.

Subagents go through iR first, which is a setup/teardown wrapper. It creates an agent ID, resolves permissions, loads any skills, sets up MCP clients, manages transcripts, and only then calls rR.

When the loop finishes, iR cleans up after itself.

Main conversation: [inline setup] -> rR (shared core loop)

Subagents/skills: iR (setup) -> rR (same loop)

/btw: GR -> rR (same loop)
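The difference between the wrapped and unwrapped paths can be sketched like this. All names are made up for illustration (`coreLoop` plays the role of rR, `runSubagent` the role of iR, `runLightFork` the role of GR), and the try/finally is my assumption about how the cleanup is guaranteed, not something I confirmed in the bundle.

```typescript
type Turn = { role: "user" | "assistant"; content: string };

const log: string[] = [];

// Stand-in for the shared loop that every path ends up calling.
function coreLoop(messages: Turn[]): Turn {
  log.push("core loop");
  return { role: "assistant", content: `answered ${messages.length} message(s)` };
}

// Subagent path: setup, then the shared loop, then cleanup even on failure.
function runSubagent(messages: Turn[]): Turn {
  const agentId = `agent-${log.length}`; // hypothetical ID scheme
  log.push(`setup ${agentId}`);
  try {
    return coreLoop(messages);
  } finally {
    log.push(`teardown ${agentId}`);
  }
}

// Lightweight fork path: no wrapper, straight into the same loop.
function runLightFork(messages: Turn[]): Turn {
  return coreLoop(messages);
}

const question: Turn[] = [{ role: "user", content: "hi" }];
runSubagent(question);
runLightFork(question);
// log is now: ["setup agent-0", "core loop", "teardown agent-0", "core loop"]
```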

uGY returns the final response back to the parent conversation as <local-command-stdout>.

This is important. /fork doesn't do this nor does /btw. Only forked skills send results back to the parent.

Hidden Temperature

Deep in the API request builder, there's this line:

    let H1 = !S6 ? _.temperatureOverride ?? 1 : void 0;

If extended thinking is disabled (!S6), use temperatureOverride (defaulting to 1.0). If thinking is enabled, temperature is forced to undefined (API decides).

Temperature controls how much the model deviates from its most likely predictions. At 1.0, the default, the probability distribution is unmodified. Lower values sharpen it, making the model more predictable. Higher values flatten it, making rarer words more likely. When extended thinking is on, the temperature is left undefined, presumably because the API does not accept a custom temperature alongside extended thinking.
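If the sharpen/flatten idea feels abstract, this is the standard softmax-with-temperature calculation in a few lines. It is a generic illustration with made-up scores, not code from Claude Code, and it rejects a temperature of 0 because dividing by zero is undefined here (real APIs treat 0 as "always pick the top token").

```typescript
// Convert raw scores (logits) into probabilities, scaled by temperature.
function softmaxWithTemperature(logits: number[], temperature: number): number[] {
  if (temperature <= 0) throw new Error("temperature must be > 0 in this sketch");
  const scaled = logits.map((x) => x / temperature);
  const max = Math.max(...scaled); // subtract the max for numerical stability
  const exps = scaled.map((x) => Math.exp(x - max));
  const total = exps.reduce((a, b) => a + b, 0);
  return exps.map((e) => e / total);
}

const logits = [2.0, 1.0, 0.0]; // made-up scores for three candidate tokens
const sharp = softmaxWithTemperature(logits, 0.5);
const plain = softmaxWithTemperature(logits, 1.0);
const flat = softmaxWithTemperature(logits, 2.0);
// The top token's share shrinks as temperature rises: sharp[0] > plain[0] > flat[0].
```

With these scores the favourite token gets roughly 87% of the probability at 0.5, 67% at 1.0 and 51% at 2.0, so the long shots get a real chance only at the high end.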

Then later:

    {
      ...H1 !== void 0 && { temperature: H1 }
    }

It spreads the temperature into the API request object. So it seems like the plumbing for fiddling with temperature is there but there's nothing we can do about it since it is purposefully unexposed.
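De-minified, the two snippets boil down to something like the sketch below. The names `RequestOptions`, `resolveTemperature` and `buildRequest` are mine, the model string is a placeholder, and the real request object obviously carries far more fields.

```typescript
interface RequestOptions {
  thinkingEnabled: boolean;
  temperatureOverride?: number;
}

// Mirrors the minified logic: pick a temperature only when thinking is off.
function resolveTemperature(opts: RequestOptions): number | undefined {
  return !opts.thinkingEnabled ? opts.temperatureOverride ?? 1 : undefined;
}

function buildRequest(opts: RequestOptions): { model: string; temperature?: number } {
  const temperature = resolveTemperature(opts);
  return {
    model: "example-model", // placeholder, not a real model ID
    // Spreading `false` adds nothing, so the key is omitted entirely when undefined.
    ...(temperature !== undefined && { temperature }),
  };
}
```

Two details are easy to miss. Because the default uses `??` rather than `||`, an override of 0 survives instead of being replaced by 1. And with thinking enabled the temperature key is absent from the request altogether, not present with an undefined value.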

From my snooping, the only place temperatureOverride is set to something other than the default is skill improvement, where it's hardcoded to 0 for deterministic edits.

Writing your own forking skill

The SKILL.md frontmatter supports these fields (extracted from the source):

    name: rabbithole
    description: "Give me a rabbit hole to read while you work"
    argument-hint: "<topic>"
    user-invocable: true
    context: fork            # runs as a forked subagent
    agent: general-purpose   # full tool access
    model: opus              # can specify model
    allowed-tools: "*"       # or restrict to specific tools

With context: fork, our skill gets:

- Fork - an isolated subagent with its own context (via Iv1 + uGY)
- Full tools - unlike /btw, which is denied all tools
- Merge back - results returned to parent via <local-command-stdout>
- Model selection - can pick opus, sonnet, haiku

What we CAN'T get (yet):

- Temperature control - the temperatureOverride parameter exists in iR's call chain but isn't read from the skill.

We can try to work around this with some prompt engineering. Instead of setting temperature: 0.9, you write a system prompt that fights the model's trained defaults, something like "Hey Claude, reject your first instinct, experiment, go crazy." It's not the same as cranking the sampling distribution but it's what we have.

An upcoming post will cover how I use this to make a custom skill, but this should be enough to inspire you to experiment with creating your own.